Advanced Micro Devices (AMD) reported its first-quarter financial results for the period ended March 2026, delivering a performance that exceeded Wall Street’s expectations on both the top and bottom lines. The semiconductor giant’s latest earnings report underscores a massive shift in the computing landscape, driven by the insatiable demand for artificial intelligence infrastructure. As enterprises and hyperscalers transition from traditional server architectures to AI-centric data centers, AMD has emerged as a primary beneficiary, reporting a 38% year-over-year increase in total revenue to $7.44 billion. The results were bolstered by a historic surge in data center sales, which climbed 57% to reach $5.8 billion, reflecting the successful ramp-up of the company’s Instinct GPU accelerators and EPYC server processors.

For the second quarter, AMD issued robust guidance that further fueled investor optimism. The company expects revenue of approximately $11.2 billion, well above the $10.52 billion analysts had expected, according to LSEG data. The outlook suggests that the supply chain constraints that have plagued the industry are beginning to ease, or at least that AMD has secured enough capacity to meet its aggressive growth targets. The company’s stock has continued a remarkable run that has seen its valuation more than triple over the past twelve months, including a 66% gain in the first four months of 2026 alone.

The Data Center Transformation and the CPU Renaissance

The cornerstone of AMD’s first-quarter success was its Data Center segment. While the broader technology market has focused heavily on the rivalry between AMD and Nvidia in the graphics processing unit (GPU) space, AMD’s dual-threat capability in both GPUs and central processing units (CPUs) has proven to be a strategic advantage. For years, the industry narrative suggested that GPUs would eventually marginalize CPUs in the AI era. However, the emergence of "agentic AI"—autonomous AI systems capable of complex reasoning and multi-step task execution—has sparked what industry analysts are calling a "major renaissance" for high-performance CPUs.

Agentic AI workloads require more than the raw parallel processing power of a GPU; they also depend on the sophisticated control logic, branching, and low-latency responses that modern x86 CPUs provide. AMD’s EPYC processor line has captured significant server market share from Intel by offering strong energy efficiency and core density. That momentum has strengthened AMD’s position in general-purpose computing while the company scales its AI-specific hardware. Together, the two product lines let AMD offer integrated solutions that appeal to cloud service providers looking to lower their total cost of ownership.

Strategic Alliance: The AMD and Intel x86 Partnership

One of the most significant developments of the quarter was the unexpected announcement of a strategic partnership between AMD and its long-time rival, Intel. The two companies, which have competed fiercely for decades, announced they would collaborate on a new instruction set extension for x86 CPUs. The move is widely seen as both a defensive and an offensive maneuver against the rising influence of ARM-based architectures in the data center.

The new feature, dubbed AI Compute Extensions, is designed to drastically improve the performance of x86 chips in AI environments. According to technical specifications released by the companies, the extensions aim to increase compute density by 16 times while simultaneously improving energy efficiency. By standardizing these extensions across both AMD and Intel platforms, the two companies hope to ensure that the vast ecosystem of x86 software remains the preferred choice for AI developers. This collaboration represents a fundamental shift in industry dynamics, prioritizing the health of the x86 ecosystem over individual competitive advantages in the face of a common threat from alternative architectures.

Product Innovation: The Helios Rack-Scale System

Looking ahead to the latter half of 2026, AMD is preparing to enter a new market segment with the launch of its first full rack-scale system for AI data centers, named Helios. Historically, AMD has focused on selling individual components—chips and accelerators—to original equipment manufacturers (OEMs). With Helios, AMD is moving up the value chain to provide fully integrated, liquid-cooled server racks designed specifically for massive AI model training and inference.

The Helios system is positioned as a direct competitor to Nvidia’s high-end Grace Blackwell and Vera Rubin systems. With price points for these integrated systems often exceeding $3 million per rack, the move into systems integration represents a significant revenue opportunity for AMD. Early interest in Helios has been exceptionally strong, with industry leaders OpenAI and Meta already signing up for initial shipments. For these large-scale AI buyers, AMD’s entry into the rack-scale market provides a vital second source of high-end compute, reducing their dependence on Nvidia and giving them leverage in pricing negotiations.

Macroeconomic Headwinds and Supply Chain Challenges

Despite the record-breaking financial performance, the semiconductor industry continues to navigate a complex and volatile global environment. A primary concern for AMD and its peers is the ongoing global memory shortage. High-bandwidth memory (HBM), essential for AI GPUs, remains in short supply as manufacturing capacity struggles to keep pace with demand. This scarcity has led to a "frenzy" in the memory sector, exemplified by Micron Technology’s stock performance. Micron’s shares have surged over 700% in the past year, pushing its market capitalization beyond the $700 billion mark as it becomes a critical gatekeeper for AI hardware production.

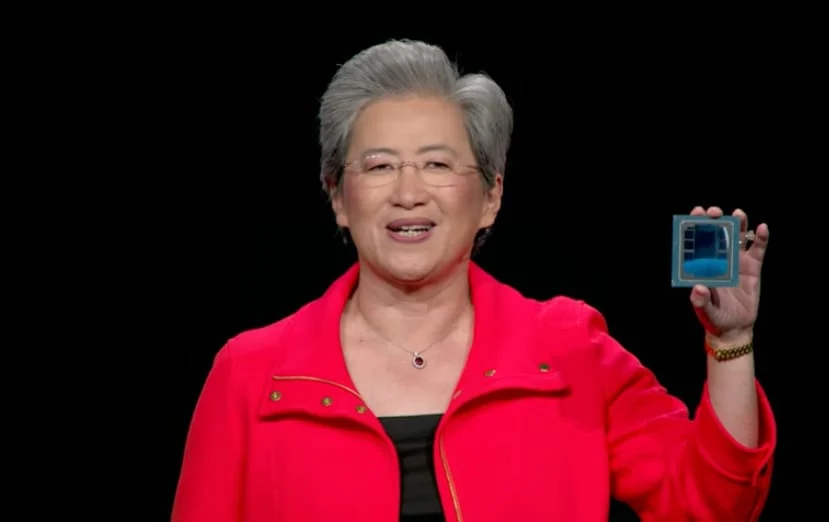

Furthermore, the industry is grappling with capacity constraints in advanced packaging. Technologies such as Chip-on-Wafer-on-Substrate (CoWoS) have become the latest bottleneck in the AI supply chain. As chips become more complex and modular, the ability to package them efficiently is as important as the ability to fabricate the silicon itself. AMD CEO Lisa Su has noted that while the company is working closely with manufacturing partners like TSMC, the "advanced packaging moat" remains a significant challenge for the entire sector.

Geopolitical instability has also added a layer of risk to the global supply chain. The ongoing conflict in Iran has disrupted key shipping routes and impacted the availability of certain raw materials used in semiconductor manufacturing. These supply chain shocks have contributed to a volatile pricing environment, forcing companies to manage their inventories with extreme precision to avoid production halts.

Competitive Landscape and Market Implications

The broader semiconductor sector is experiencing a period of unprecedented growth and realignment. Intel, once seen as a laggard in the AI race, recently posted its best month ever on the stock market, with its shares more than doubling after its first-quarter results exceeded analyst projections. The market appears to be shifting away from a "winner-takes-all" mentality centered solely on Nvidia, toward a recognition that the AI opportunity is vast enough to support multiple multi-billion-dollar players.

AMD’s ability to position itself as the primary alternative to Nvidia has been central to its valuation surge. While Nvidia holds a commanding lead in software ecosystems (notably through its CUDA platform), AMD’s open-source approach, centered on its ROCm software platform, has begun to gain traction among developers who favor flexibility and portability. The adoption of AMD hardware by OpenAI is a particularly strong endorsement, suggesting that the software barriers to entry are becoming more permeable as the industry matures.

Chronology of Key Events Leading to Q1 2026 Results

The path to AMD’s current success can be traced through several pivotal moments over the last eighteen months:

- October 2024: AMD announces a landmark deal with OpenAI to provide Instinct series GPUs for next-generation model training, signaling the first major crack in Nvidia’s dominance.

- February 2025: Meta expands its partnership with AMD, committing to use 6 gigawatts of AMD-powered GPU capacity for its global data center footprint.

- July 2025: AMD unveils its "Advancing AI" vision, shifting the company’s internal R&D focus entirely toward AI-integrated silicon across its laptop, desktop, and server divisions.

- January 2026: The x86 alliance with Intel is formalized, aimed at countering the growth of ARM-based custom silicon developed by Amazon and Google.

- March 2026: AMD closes the first quarter with record data center revenue, setting the stage for the launch of the Helios system.

Conclusion and Future Outlook

As AMD moves into the remainder of 2026, the company’s trajectory appears firmly tied to the scaling of AI infrastructure. The transition from experimental AI to "agentic" and "production-grade" AI requires a level of compute density and energy efficiency that only a few companies in the world can provide. By leveraging its expertise in both CPU and GPU architecture, and by moving into integrated systems with Helios, AMD has diversified its revenue streams and solidified its role as a cornerstone of the modern digital economy.

However, the road ahead is not without obstacles. The reliance on advanced packaging and the vulnerability of global supply chains to geopolitical conflict remain persistent risks. Additionally, as Nvidia prepares to ship its Vera Rubin architecture, the competitive pressure to innovate will only intensify. For now, AMD’s first-quarter results send a clear signal to the market: the AI revolution is not a single-company phenomenon, but a structural shift that is lifting the entire semiconductor industry to new heights. Investors and industry observers alike will be watching closely to see whether AMD can maintain its momentum as the Helios era begins later this year.